Gamma Correction

Gamma correction accounts for the non-linear way human eyes perceive brightness. We're far more sensitive to changes in dark tones than bright ones. In 3D rendering, properly handling gamma-encoded textures (e.g. albedo) versus linear textures like normals ensures physically accurate lighting calculations and realistic visual output.

Gamma encoding makes better use of a limited number of bits by matching how human eyes respond to changes in luminance. Gamma defines the relationship between a pixel’s numerical value and its actual luminance. Without gamma encoding, the subtle dark shades captured by a digital camera would be stored too coarsely, and the image would not appear as it did to our eyes.

How Humans Perceive Brightness

To understand how brightness is encoded, let’s go back to the very beginning of an image: taking a picture with a camera.

A camera sensor has many photosites arranged in a grid, usually with one photosite for each of a pixel’s R, G and B components. Each photosite is a well that collects photons and converts them into an electric signal, which is then used to encode the pixel. The relative proportion of photons distributed across R, G and B gives the color, and the absolute quantity of photons gives the brightness.

Think of each photosite as “counting” the number of photons that have gathered at its surface. In this article, we treat this value (the number of photons) as the luminance of the pixel.

Luminance vs Perceived Brightness

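You would think there would be a linear relationship between the actual luminance (the number of photons) detected by the camera and the brightness perceived by our eyes. It would make sense that doubling the luminance doubles the perceived brightness:

$$\text{perceived brightness} \propto \text{luminance}$$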

But actually we get something different.

The relationship between luminance and brightness is not linear but follows a power law, described by this function, with perceived brightness B and luminance L:

$$B = L^{1/2.2}$$

where B and L are normalized to the range [0, 1]. This 2.2 value is what we call the gamma.
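To get a feel for this power law, here is a minimal Python sketch of it. The `GAMMA` constant and the `perceived_brightness` function are illustrative names chosen for this example, and the model is the simplified B = L^(1/2.2) curve described above rather than a full model of human vision.

```python
GAMMA = 2.2  # the gamma value from the power law


def perceived_brightness(luminance: float) -> float:
    """Simplified model: B = L ** (1 / GAMMA), with L normalized to [0, 1]."""
    if not 0.0 <= luminance <= 1.0:
        raise ValueError("luminance must be normalized to [0, 1]")
    return luminance ** (1.0 / GAMMA)


if __name__ == "__main__":
    # Print a few sample luminances alongside their modeled brightness.
    for lum in (0.0, 0.05, 0.218, 0.5, 1.0):
        print(f"L = {lum:.3f} -> B = {perceived_brightness(lum):.3f}")
```

Under this model, a luminance of 0.5 already looks about 73% bright, and a luminance of only about 0.218 (that is, 0.5^2.2) looks half as bright as white.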

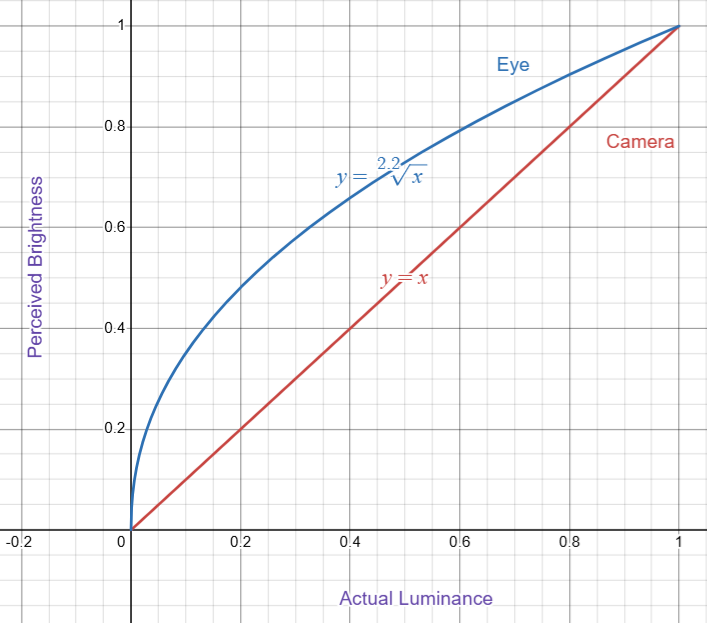

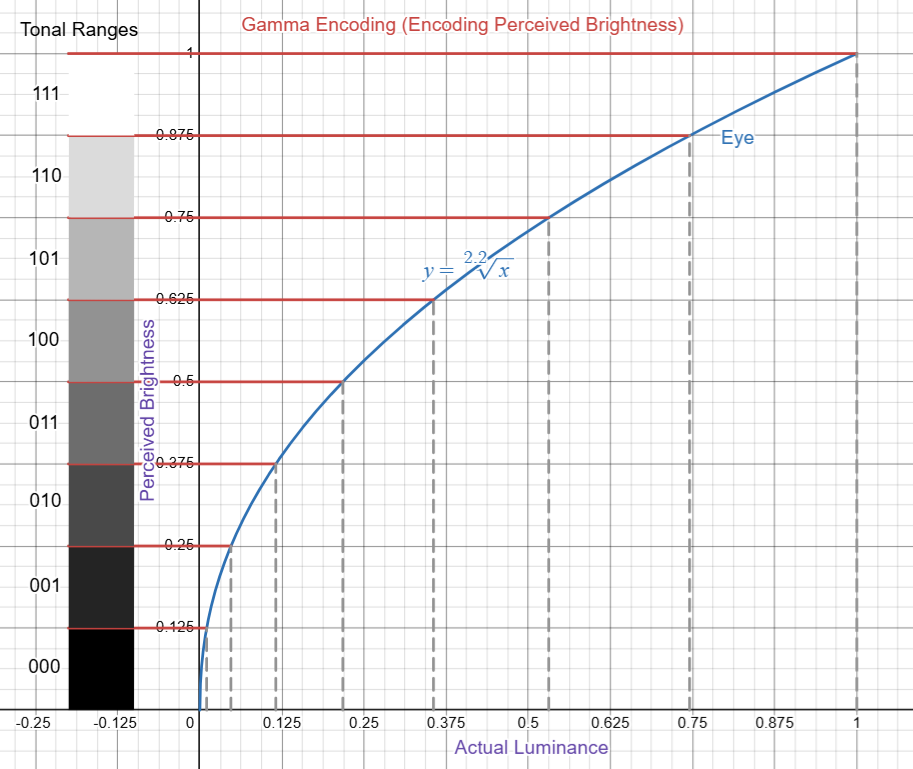

Here is the function plotted:

By looking at this graph, we can deduce that in the dark tones, small differences in luminance produce large differences in perceived brightness. These two animations help clarify this:

The tangent represents the instantaneous rate of change at a point: the steeper the slope, the faster y changes with x. On average, the slopes are steeper in the dark tones (low values of L) and less steep in the bright tones (high values of L). The graph therefore confirms that our eyes are more sensitive to changes in dark tones than in bright tones.
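The same conclusion follows from the slope of the curve itself. Differentiating the simplified model $B = L^{1/2.2}$ gives:

$$\frac{dB}{dL} = \frac{1}{2.2}\,L^{\frac{1}{2.2} - 1} \approx 0.455\,L^{-0.545}$$

At $L = 0.1$ the slope is about $1.60$, while at $L = 0.9$ it is only about $0.48$, so the curve is roughly 3.3 times steeper at $L = 0.1$ than at $L = 0.9$. As $L$ approaches 0, the slope grows without bound.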

Is there an evolutionary reason for this? One plausible explanation is that our ancestors benefited from seeing contrast better in the dark, which helped with survival in low light. Sensitivity to dark tones also helps us perceive subtle variations in 3D form and depth through shadows.

Encoding Brightness

So what is the problem? If the camera encodes the image in linear space (i.e. it stores the luminance value) and the monitor then displays it in linear space, our eyes will, in principle, see the image exactly as it appeared outside. The catch is how a limited number of bits gets distributed across that range.

Linear Encoding

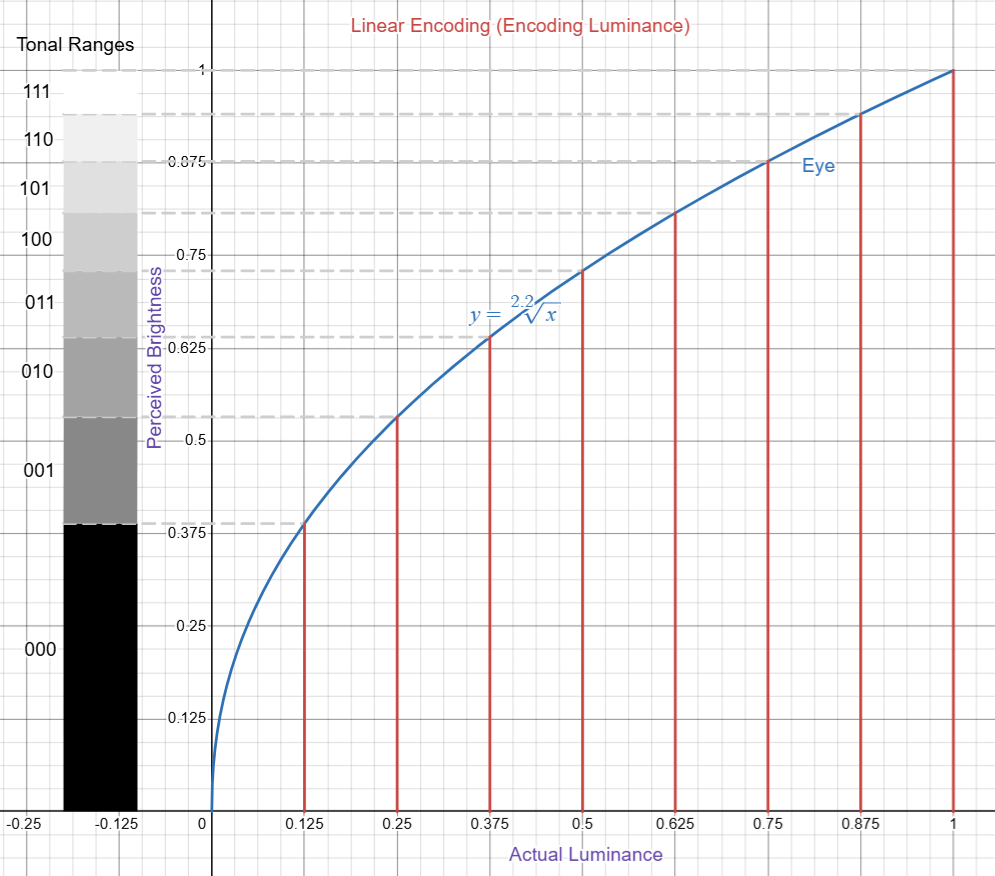

When we encode in linear space, we end up with uneven tonal ranges. By “uneven tonal ranges” I mean that the range of perceived brightness covered by each discrete encoded value is not the same across values: it is larger in the darker tones and smaller in the lighter tones.

To illustrate this, I’ll use the graph again. As a simple example, say we have 3 bits to encode brightness, and therefore 8 levels (or tonal ranges). Because we are encoding linearly, the value stored in the image is proportional to the actual luminance. For a normalized luminance encoded with 3 bits, level k covers:

$$L \in \left[\frac{k}{8}, \frac{k+1}{8}\right], \qquad k = 0, 1, \dots, 7$$

Each level describes 1/8 of the total luminance range, but the brightness range it covers, $\left(\frac{k+1}{8}\right)^{1/2.2} - \left(\frac{k}{8}\right)^{1/2.2}$, is not uniform across levels:

| Encoded Bit Value | Luminance Range Size | Tonal (Perceived Brightness) Range Size |
|---|---|---|
| 000 | 1/8 (0.125) | 0.389 |
| 001 | 1/8 | 0.144 |
| … | … | … |
| 110 | 1/8 | 0.064 |
| 111 | 1/8 | 0.059 |
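The tonal range sizes in the table can be reproduced with a short Python sketch, assuming the same simplified model B = L^(1/2.2) and 3-bit linear encoding. The function names (`linear_tonal_ranges`, `dark_levels_linear`) are hypothetical helpers for this example, and "darker tones" is taken here to mean the lower half of perceived brightness (B < 0.5), which is an assumption.

```python
GAMMA = 2.2  # exponent of the simplified model B = L ** (1 / GAMMA)


def brightness(luminance: float) -> float:
    """Perceived brightness of a normalized luminance in [0, 1]."""
    return luminance ** (1.0 / GAMMA)


def linear_tonal_ranges(bits: int) -> list[float]:
    """Perceived-brightness width of each level under linear encoding.

    With n = 2 ** bits levels, level k covers luminance [k / n, (k + 1) / n].
    """
    n = 2 ** bits
    return [brightness((k + 1) / n) - brightness(k / n) for k in range(n)]


def dark_levels_linear(levels: int) -> float:
    """Number of linear levels covering the darker half of brightness (B < 0.5).

    B < 0.5 is equivalent to L < 0.5 ** GAMMA, and each level spans 1 / levels of L.
    """
    return levels * 0.5 ** GAMMA


if __name__ == "__main__":
    for k, width in enumerate(linear_tonal_ranges(3)):
        print(f"{k:03b} | 1/8 | {width:.3f}")
    print(f"dark levels: {dark_levels_linear(8):.2f} of 8, {dark_levels_linear(32):.2f} of 32")
```

With this definition, about 1.7 of the 8 linear levels (and about 7 of 32) fall in the darker half of perceived brightness, since B < 0.5 corresponds to L < 0.5^2.2 ≈ 0.218.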

As the table above shows, tonal ranges are unequal across levels. Of our 8 levels, only ~2 are used for the darker tones and 6 for the brighter tones. Over 32 levels we would get something like this:

Linear encoding introduces a range of issues:

- Subtlety of dark tones is insufficiently captured
  - Linear encoding wastes precision in bright tones while having insufficient precision in dark tones, where human vision is most sensitive. The subtlety of dark tones is lost when the camera discretizes luminance in linear space.
- Banding in shadows
  - With limited bit depth (e.g. 8-bit), linear encoding produces visible banding artifacts in dark areas.
- Encoded value (luminance) is not proportional to perceived brightness
  - You would expect that doubling the encoded value would double the perceived brightness, but it does not: twice the luminance gives less than twice the perceived brightness.
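The last point can be quantified with the same simplified model $B = L^{1/2.2}$: doubling any luminance $L$ multiplies the perceived brightness by a constant factor.

$$\frac{B(2L)}{B(L)} = \frac{(2L)^{1/2.2}}{L^{1/2.2}} = 2^{1/2.2} \approx 1.37$$

So twice the luminance looks only about 37% brighter, not twice as bright.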

Gamma Encoding

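Gamma encoding fixes this issue. To get even perceptual tonal ranges, the camera needs to encode the perceived brightness rather than the luminance, so the encoded value V equals the perceived brightness B:

$$V = L^{1/2.2}$$

With 8 levels (3 bits), level k then covers $V \in \left[\frac{k}{8}, \frac{k+1}{8}\right]$, which corresponds to luminance $L \in \left[\left(\frac{k}{8}\right)^{2.2}, \left(\frac{k+1}{8}\right)^{2.2}\right]$.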

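With this, the camera covers all perceived brightness ranges equally: each of the 8 levels spans 1/8 of the brightness range, compared to our linear encoding, where the first level alone spanned 0.389 of it.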

Gamma encoding creates a linear relationship between the encoded value and the perceived brightness: doubling the encoded value now doubles the perceived brightness.

Additionally, gamma encoding encodes darker tones more precisely than linear encoding does. With 8 levels, the darker half of the perceived brightness range (B < 0.5) now gets 4 levels instead of roughly 2.
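To make the precision argument concrete with a realistic bit depth, here is a Python sketch comparing 8-bit linear and gamma encoding. It assumes a pure 2.2 power law and simple rounding to the nearest code; the names `encode_linear`, `encode_gamma` and `codes_spanned` are hypothetical, and real sRGB encoding uses a slightly different piecewise curve.

```python
from collections.abc import Callable

GAMMA = 2.2  # assumed pure power-law gamma (not the piecewise sRGB curve)
MAX_CODE = 255  # 8-bit encoding


def encode_linear(luminance: float) -> int:
    """Store luminance directly: the code is proportional to L."""
    return round(luminance * MAX_CODE)


def encode_gamma(luminance: float) -> int:
    """Store perceived brightness: the code is proportional to L ** (1 / GAMMA)."""
    return round(luminance ** (1.0 / GAMMA) * MAX_CODE)


def codes_spanned(encode: Callable[[float], int], lo: float, hi: float) -> int:
    """Number of distinct codes used for luminances in [lo, hi].

    Both encoders are monotonic and continuous before rounding,
    so every code between encode(lo) and encode(hi) is reached.
    """
    return encode(hi) - encode(lo) + 1


if __name__ == "__main__":
    # Darkest and brightest 1% of the luminance range.
    for lo, hi in ((0.0, 0.01), (0.99, 1.0)):
        print(f"[{lo}, {hi}]: linear {codes_spanned(encode_linear, lo, hi)} codes, "
              f"gamma {codes_spanned(encode_gamma, lo, hi)} codes")
```

Under these assumptions, the darkest 1% of luminance gets 4 codes with linear encoding but 32 with gamma encoding, while the brightest 1% drops from 4 codes to 2. Gamma encoding moves precision away from bright tones, where we barely notice it, toward dark tones.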

Display Gamma

We’ve established that gamma encoding, through its trick of encoding perceived brightness instead of luminance, is the better solution for encoding brightness with a limited number of bits.

Nonetheless, at the end of the day our eyes need to see the actual luminance of the image to perceive its colors accurately, so the display must convert our gamma-encoded pixels back to the actual luminance. This is called the display gamma, and its main goal is to compensate for the image file’s gamma.

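We have that the encoded value is:

$$V = L^{1/2.2}$$

Therefore the display value is equal to the encoded value raised to the power of gamma, which recovers the original luminance:

$$L_{\text{display}} = V^{2.2} = \left(L^{1/2.2}\right)^{2.2} = L$$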
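The round trip from camera to display can be sketched in a few lines of Python, assuming a pure 2.2 power law (again, not the exact piecewise sRGB curve). `encode`, `display` and `display_code` are hypothetical names; `display_code` models an 8-bit stored code being turned back into emitted luminance.

```python
GAMMA = 2.2  # assumed pure power-law gamma


def encode(luminance: float) -> float:
    """File side: store perceived brightness V = L ** (1 / GAMMA), L in [0, 1]."""
    return luminance ** (1.0 / GAMMA)


def display(encoded: float) -> float:
    """Display side: emit luminance V ** GAMMA, undoing the encoding."""
    return encoded ** GAMMA


def display_code(code: int, bits: int = 8) -> float:
    """Emitted luminance for an integer code stored with the given bit depth."""
    max_code = 2 ** bits - 1
    return display(code / max_code)


if __name__ == "__main__":
    for lum in (0.01, 0.2, 0.5, 1.0):
        v = encode(lum)
        print(f"L = {lum:.2f} -> V = {v:.3f} -> displayed {display(v):.3f}")
    print(f"8-bit code 128 -> displayed luminance {display_code(128):.3f}")
```

A mid-scale code of 128 emits only about 22% of full luminance, which under this model is perceived as roughly half brightness.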

Gamma vs Linear Textures in PBR

For the PBR (Physically Based Rendering) textures we handle in 3dverse, we need to properly identify and mark which textures are in sRGB space (gamma-encoded) and which are in linear space (linear-encoded). Note that the sRGB transfer function is a piecewise curve (a short linear segment near black followed by a power segment with exponent 2.4) that closely approximates a gamma of 2.2. The following is a good generalization:

| Texture Type | Color Space |
|---|---|
| Albedo | sRGB |
| Emission | sRGB |
| Normal | Linear |
| Metallic | Linear |
| Roughness | Linear |
| Ambient Occlusion | Linear |

Albedo and emission textures represent color/light information and are stored in gamma space to match how they are authored, whether captured by cameras or created by artists. The other texture types (normal, metallic, roughness, ambient occlusion) do not represent color information; they store data such as directions or material parameters, so they are simply stored in linear space.

On the rendering side, gamma-encoded textures are converted back to linear space before they are used, to ensure correct lighting calculations and visual output.
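Here is a minimal Python sketch of that decode step for a single texel. `srgb_to_linear` implements the standard sRGB decoding curve from IEC 61966-2-1; `TEXTURE_COLOR_SPACE` and `decode_texel` are hypothetical names that mirror the table above and are not a 3dverse API. In practice this conversion is usually done by the GPU when a texture is created with an sRGB format (for example `VK_FORMAT_R8G8B8A8_SRGB` in Vulkan), and it applies to the color channels only, not alpha.

```python
# Hypothetical mapping mirroring the texture table; not a 3dverse API.
TEXTURE_COLOR_SPACE = {
    "albedo": "srgb",
    "emission": "srgb",
    "normal": "linear",
    "metallic": "linear",
    "roughness": "linear",
    "ambient_occlusion": "linear",
}


def srgb_to_linear(c: float) -> float:
    """Standard sRGB decoding (IEC 61966-2-1) for a color channel in [0, 1]."""
    if c <= 0.04045:
        return c / 12.92  # linear segment near black
    return ((c + 0.055) / 1.055) ** 2.4  # power segment


def decode_texel(texture_type: str, channels: tuple[float, ...]) -> tuple[float, ...]:
    """Return color channel values in linear space, ready for lighting math."""
    if TEXTURE_COLOR_SPACE[texture_type] == "srgb":
        return tuple(srgb_to_linear(c) for c in channels)
    return channels  # already linear data: leave untouched


if __name__ == "__main__":
    print(decode_texel("albedo", (0.5, 0.5, 0.5)))
    print(decode_texel("normal", (0.5, 0.5, 1.0)))
```

An sRGB albedo value of 0.5 decodes to about 0.214 in linear space (close to the 0.5^2.2 ≈ 0.218 of a pure 2.2 gamma), while a normal-map texel such as (0.5, 0.5, 1.0) passes through unchanged.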

The rendering pipeline performs lighting calculations in linear space because light behaves linearly: contributions from several lights add up, and when a light’s intensity doubles, the light it reflects should double too. If we performed these calculations in gamma space, we would get physically incorrect results: shadows would be too dark, highlights too bright, and color mixing would be wrong.
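The effect of doing the math in the wrong space can be seen with two tiny examples in Python: averaging black and white (as in color mixing or filtering) and adding two equal light contributions. This sketch assumes a pure 2.2 power law and values in [0, 1]; all function names are hypothetical.

```python
GAMMA = 2.2  # assumed pure power-law gamma


def to_linear(encoded: float) -> float:
    """Gamma-encoded value -> linear luminance."""
    return encoded ** GAMMA


def to_gamma(luminance: float) -> float:
    """Linear luminance -> gamma-encoded value."""
    return luminance ** (1.0 / GAMMA)


def mix_gamma_space(a: float, b: float) -> float:
    """Average two encoded values directly (physically incorrect)."""
    return (a + b) / 2


def mix_linear_space(a: float, b: float) -> float:
    """Decode, average the luminances, then re-encode (physically correct)."""
    return to_gamma((to_linear(a) + to_linear(b)) / 2)


def add_lights_gamma_space(a: float, b: float) -> float:
    """Sum two encoded light contributions directly (physically incorrect)."""
    return a + b


def add_lights_linear_space(a: float, b: float) -> float:
    """Sum the luminances of two light contributions, then re-encode."""
    return to_gamma(to_linear(a) + to_linear(b))


if __name__ == "__main__":
    print("mix black/white, gamma space :", mix_gamma_space(0.0, 1.0))
    print("mix black/white, linear space:", round(mix_linear_space(0.0, 1.0), 3))
    print("two lights of 0.5, gamma space :", add_lights_gamma_space(0.5, 0.5))
    print("two lights of 0.5, linear space:", round(add_lights_linear_space(0.5, 0.5), 3))
```

Mixing black and white in gamma space gives an encoded 0.5, which displays at only about 22% luminance, so the blend is too dark; mixing in linear space gives about 0.730. Conversely, adding two encoded contributions of 0.5 in gamma space already saturates at 1.0, whereas the physically correct sum encodes to about 0.685, so gamma-space sums blow out highlights.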